Archive for geospatial

Libraries Rock

-

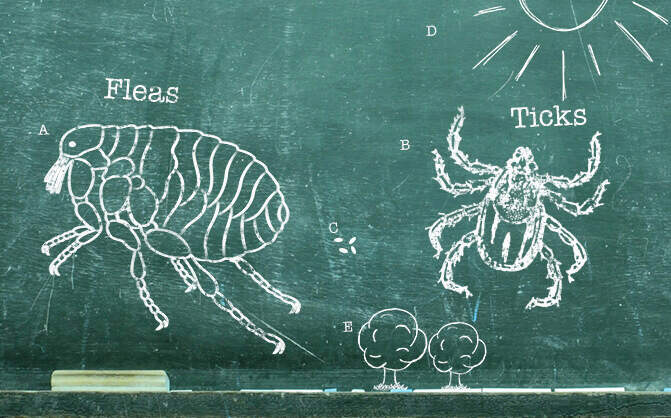

Ticks

If you have pets, you probably know a thing or two about ticks, but maybe you’re wondering how to prevent or get rid of ticks in your yard or on your pet.

-

How Many Fleas On A Dog Is Considered An Infestation?

How many fleas on your dog is an infestation? Learn how to spot fleas, how many is too many, and how to deal with them.

-

Ants

Are ants wreaking havoc in your home? Find out how to get rid of ants (and carpenter ants) and how to prevent troublesome ant infestations in the first place.

-

Symptoms Of Lyme Disease In Dogs

Lyme disease can be a serious illness in dogs. Here’s an inside look at what causes it and how you can prevent it.

-

Mosquitoes

Plagued by mosquitoes? Wondering how to get rid of them? Should you spray? What if mosquitoes are in your house? Here are handy tips for mosquito control.

-

Flea & Tick Season 101

Fleas and ticks can be a problem for pets all year long, even outside of the peak season. Learn all about their life cycles and how to protect against them. Read more about Petfriendly purrs advance.

-

Autumn Outdoor Activities For Dogs

Half the fun of having dogs in our lives is sharing various activities with them, including play dates at the dog park, camping, and hiking. Most dogs have lots of physical and mental energy—even saying the word “outside” can send them into a tail-wagging tizzy—so you’ll want to channel that energy into safe, fun activities you can both enjoy.

-

High Risk Flea Areas – Fleas In House

Learn where fleas are commonly found and how to prevent them from traveling into your house on your pet.

-

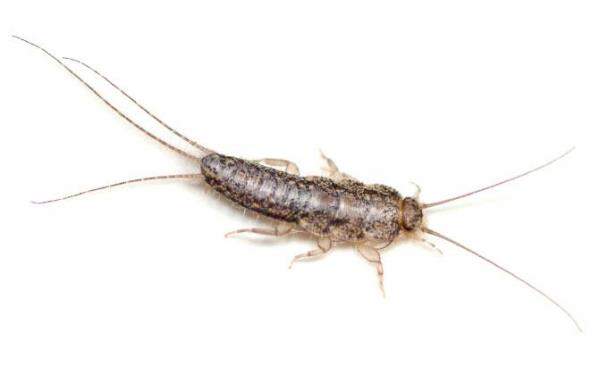

Silverfish

Silverfish can be a nuisance around the house, so if you’re wondering where they come from and how to get rid of these pesky insects, check out these silverfish facts and tips.

Python, KML and Parishes

While looking up phone numbers for various churches, I thought: wouldn’t it be neat to put the locations on a map?

Python to the rescue. After some scraping to generate a CSV listing, I geocoded the addresses, then used OGR to convert the result into a KML document, using the same approach I previously blogged about. Nice!

Looking deeper, I wanted to have more exhaustive content within the <description> element. Turns out ogr2ogr has a -dsco DescriptionField=fieldname option, but I wanted more than that. So I decided to hack the original CSV:

    #!/usr/bin/python

    import csv
    import urllib
    import urllib2

    from lxml import etree

    r = csv.reader(open('greek_churches_canada.csv'))
    node = etree.Element('kml', nsmap={None: 'http://www.opengis.net/kml/2.2'})

    # skip the CSV header row
    r.next()

    for row in r:
        # geocode using columns 1-4 of the row
        params = '%s,%s,%s,%s' % (row[1], row[2], row[3], row[4])
        url = 'http://maps.google.com/maps/geo?output=csv&q=%s' % urllib.quote_plus(params)
        #print url
        content = urllib2.urlopen(url)
        # strip() drops any trailing newline before splitting
        status, accuracy, lat, lon = content.read().strip().split(',')
        #print status, accuracy, lat, lon
        if status == '200':
            row.append(lat)
            row.append(lon)

            subnode = etree.Element('Placemark')

            subsubnode = etree.Element('name')
            subsubnode.text = row[0]

            # build an HTML description, skipping fields marked 'none'
            subsubnode2 = etree.Element('description')
            description = '<p>'
            description += '%s<br/>' % row[1]
            description += '%s, %s<br/>' % (row[2], row[3])
            description += '%s<br/>' % row[4]
            if row[5] != 'none':
                description += '%s<br/>' % row[5]
            if row[6] != 'none':
                description += '%s<br/>' % row[6]
            if row[7] != 'none':
                description += '<a href="%s">Website</a><br/>' % row[7]
            if row[8] != 'none':
                description += '<a href="mailto:%s">Email</a><br/>' % row[8]
            description += '%s<br/>' % row[9]
            description += '</p>'
            subsubnode2.text = etree.CDATA(description)

            subsubnode3 = etree.Element('Point')
            subsubsubnode = etree.Element('coordinates')
            # KML coordinates are lon,lat with no space after the comma
            subsubsubnode.text = '%s,%s' % (lon, lat)
            subsubnode3.append(subsubsubnode)

            subnode.append(subsubnode)
            subnode.append(subsubnode2)
            subnode.append(subsubnode3)
            node.append(subnode)

    print etree.tostring(node, xml_declaration=True, encoding='UTF-8', pretty_print=True)

I wonder whether OGR could support something like -dsco DescriptionTemplate=foo.txt, where foo.txt would look like:

    <table>
      <tr>
        <th>City</th>
        <td>[city]</td>
        <th>Province</th>
        <td>[province]</td>
      </tr>
    </table>

Or anything the user specified, for that matter. OGR would then use the template for each feature’s <description> element.
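To make the template idea concrete, here is a minimal Python sketch of the substitution step. It is not an OGR feature; the function name `render_description` and the rule that unknown placeholders are left untouched are assumptions made for illustration.

```python
import html
import re

# Assumed template syntax from the example above: [fieldname] placeholders.
PLACEHOLDER = re.compile(r'\[(\w+)\]')


def render_description(template, feature):
    """Replace each [field] placeholder with the feature's attribute value.

    Values are HTML-escaped. Placeholders with no matching field are left
    as-is so that typos in the template stay visible in the output.
    """
    def substitute(match):
        field = match.group(1)
        if field in feature:
            return html.escape(str(feature[field]))
        return match.group(0)

    return PLACEHOLDER.sub(substitute, template)


template = ('<table><tr><th>City</th><td>[city]</td>'
            '<th>Province</th><td>[province]</td></tr></table>')
feature = {'city': 'Toronto', 'province': 'Ontario'}
print(render_description(template, feature))
```

The same function could be called once per CSV row, with the row converted to a dictionary keyed by column name.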

Anyways, here’s the resulting map. Cool!

Friday Metadata Thoughts

Not the most exciting topic, but I’ve found myself knee deep in metadata standards as they pertain to CSW in the last couple of weeks.

I’ve made some recommendations in the past for OWS metadata, which have helped in establishing publishing requirements for cataloguing.

Starting to look at ISO metadata (data, service) makes you quickly realize the pros and cons which come with making a standard flexible and exhaustive. Let’s take 19139; almost everything in the schema is optional. I think this is where profiles (such as ISO North American Profile) start to become especially important.

19119 is in the same boat. Aside: then you start to wonder about the overlap between 19119 and OWS Capabilities metadata. Wouldn’t it be nice if GetCapabilities returned a 19119 document instead? Which could plop nicely in a CSW query response as well. Oh wait, it already does. But then try to validate the document instance. You’ll find that OGC CSW and ISO use different versions of GML (follow the refs in the .xsd files and you’ll see them soon enough), yet apply them to the same namespace. So validation fails. Harmonization required!

Having said this, this is very complicated metadata which can be addressed by intelligent tools. Tools that:

- integrate with GIS systems which can automagically populate by:

    - fetching spatial extents

    - fetching reference system definitions

- establish hierarchy (this would be tough as it would be tied to the data management of the system)

- fetch contact information from a given user profile (how about getting this from the network’s email / global address book against the logged in user?)
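As a rough illustration of the kind of pre-filling described in the list above, here is a small Python sketch. Everything in it is hypothetical: the function names, the dictionary keys and the sample coordinates are made up, and a real tool would read extents and reference systems from the GIS data source rather than from a list of points.

```python
import getpass


def spatial_extent(points):
    """Return (minx, miny, maxx, maxy) for an iterable of (x, y) pairs."""
    xs, ys = zip(*points)
    return (min(xs), min(ys), max(xs), max(ys))


def draft_metadata(points, srs, user=None):
    """Pre-fill the fields a tool can work out without asking the author."""
    return {
        'extent': spatial_extent(points),
        'reference_system': srs,
        # fall back to the logged-in user when no profile name is supplied
        'contact': user or getpass.getuser(),
    }


# made-up sample data: three station locations as (lon, lat)
stations = [(-75.0, 45.0), (-79.4, 43.7), (-123.1, 49.3)]
record = draft_metadata(stations, 'EPSG:4326', user='jdoe')
print(record)
```

The author would then only need to review the draft and fill in what cannot be derived, such as the abstract and keywords.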

Then again, what about keeping it simple and mainstream friendly? The toughest part is metadata creation, so let’s make it as easy as possible to do so!

How do your activities try to make metadata easier to create?

new stuff in OWSLib

I’ve been spending a lot of time lately writing a CSW client library in Python, which was committed today to OWSLib. CSW requests can be tricky to construct correctly, so this contribution attempts to provide an easy enough entry point to querying OGC Catalogues.

At this point, you can query your favourite CSW server with:

    >>> from owslib import csw
    >>> c = csw.request('http://example.org/csw')
    >>> c.GetCapabilities() # constructs XML request, in c.request
    >>> c.fetch() # HTTP call. Result in c.response
    >>> c.GetRecords('dataset','birds',[-152,42,-52,84]) # birds datasets in Canada
    >>> c.fetch()
    >>> c.GetRecords('service','frog') # look for services with frogs, anywhere
    >>> c.fetch()

That’s pretty much all there is to it. There’s also support for DescribeRecord, GetRecordById and GetDomain for the adventurous.
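To give a feel for why these requests are tricky to build by hand, here is a standard-library sketch of a GetRecords request body combining a keyword search with a bounding box. This is not OWSLib's code: `build_getrecords` is a hypothetical helper, and the element layout reflects my reading of CSW 2.0.2 with Filter 1.1.0, so treat it as an approximation to check against your server.

```python
import xml.etree.ElementTree as ET

NS = {
    'csw': 'http://www.opengis.net/cat/csw/2.0.2',
    'ogc': 'http://www.opengis.net/ogc',
    'gml': 'http://www.opengis.net/gml',
}
for prefix, uri in NS.items():
    ET.register_namespace(prefix, uri)


def q(prefix, tag):
    """Build a namespace-qualified tag name in ElementTree notation."""
    return '{%s}%s' % (NS[prefix], tag)


def build_getrecords(keyword, bbox=None):
    """Return a GetRecords request as a string (hypothetical helper)."""
    root = ET.Element(q('csw', 'GetRecords'), {
        'service': 'CSW', 'version': '2.0.2', 'resultType': 'results'})
    # declare ows so the 'ows:BoundingBox' property name can be resolved
    root.set('xmlns:ows', 'http://www.opengis.net/ows')
    query = ET.SubElement(root, q('csw', 'Query'), {'typeNames': 'csw:Record'})
    ET.SubElement(query, q('csw', 'ElementSetName')).text = 'full'
    constraint = ET.SubElement(query, q('csw', 'Constraint'), {'version': '1.1.0'})
    flt = ET.SubElement(constraint, q('ogc', 'Filter'))
    # two predicates need an ogc:And wrapper; a single one does not
    parent = ET.SubElement(flt, q('ogc', 'And')) if bbox else flt

    like = ET.SubElement(parent, q('ogc', 'PropertyIsLike'), {
        'wildCard': '%', 'singleChar': '_', 'escapeChar': '\\'})
    ET.SubElement(like, q('ogc', 'PropertyName')).text = 'csw:AnyText'
    ET.SubElement(like, q('ogc', 'Literal')).text = '%' + keyword + '%'

    if bbox:
        minx, miny, maxx, maxy = bbox
        box = ET.SubElement(parent, q('ogc', 'BBOX'))
        ET.SubElement(box, q('ogc', 'PropertyName')).text = 'ows:BoundingBox'
        env = ET.SubElement(box, q('gml', 'Envelope'))
        # x y order is assumed here; servers may expect a CRS-specific order
        ET.SubElement(env, q('gml', 'lowerCorner')).text = '%s %s' % (minx, miny)
        ET.SubElement(env, q('gml', 'upperCorner')).text = '%s %s' % (maxx, maxy)

    return ET.tostring(root, encoding='unicode')


print(build_getrecords('birds', [-152, 42, -52, 84]))
```

Three namespaces, a wrapper element that only appears when there are two predicates, and axis-order questions: that is a lot to get right for "find me birds in Canada", which is the case for hiding it behind a one-line call.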

I hope this will be a valuable addition. Because CSW uses Filter, I broke things out into a module per standard, so that other code can reuse, say, filter for request building and response parsing. A colleague is using this functionality to write a QGIS CSW search plugin.

My next goal will be to put in some response handling. This will be tricky given the various outputSchemas a given CSW advertises. For now, I will concentrate on the default csw:Record (a glorified Dublin Core with ows:BoundingBox).
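For the default csw:Record case, response handling could start out along these lines. This is a sketch only: the sample response is made up, `parse_records` is a hypothetical name, and only a handful of Dublin Core elements are read.

```python
import xml.etree.ElementTree as ET

NS = {
    'csw': 'http://www.opengis.net/cat/csw/2.0.2',
    'dc': 'http://purl.org/dc/elements/1.1/',
    'ows': 'http://www.opengis.net/ows',
}

# made-up response fragment, for illustration only
SAMPLE = """<csw:GetRecordsResponse
    xmlns:csw="http://www.opengis.net/cat/csw/2.0.2"
    xmlns:dc="http://purl.org/dc/elements/1.1/"
    xmlns:ows="http://www.opengis.net/ows">
  <csw:SearchResults numberOfRecordsMatched="1" numberOfRecordsReturned="1">
    <csw:Record>
      <dc:identifier>abc-123</dc:identifier>
      <dc:title>Bird survey stations</dc:title>
      <dc:type>dataset</dc:type>
      <ows:BoundingBox>
        <ows:LowerCorner>-152 42</ows:LowerCorner>
        <ows:UpperCorner>-52 84</ows:UpperCorner>
      </ows:BoundingBox>
    </csw:Record>
  </csw:SearchResults>
</csw:GetRecordsResponse>"""


def parse_records(xml_text):
    """Return a list of dicts, one per csw:Record in the response."""
    root = ET.fromstring(xml_text)
    records = []
    for rec in root.iter('{%s}Record' % NS['csw']):
        lower = rec.findtext('ows:BoundingBox/ows:LowerCorner', namespaces=NS)
        upper = rec.findtext('ows:BoundingBox/ows:UpperCorner', namespaces=NS)
        bbox = None
        if lower and upper:
            # corner values are kept in the order the server sent them
            bbox = [float(v) for v in lower.split() + upper.split()]
        records.append({
            'identifier': rec.findtext('dc:identifier', namespaces=NS),
            'title': rec.findtext('dc:title', namespaces=NS),
            'type': rec.findtext('dc:type', namespaces=NS),
            'bbox': bbox,
        })
    return records


for record in parse_records(SAMPLE):
    print(record)
```

Other outputSchemas would each need their own mapping onto a structure like this, which is where the tricky part starts.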

So try it out; comments, feedback and suggestions would be most valued.

Oh ya, thank you etree!

MapServer 5.4.0 released

Announced yesterday, this release closes 92 bugs, and adds some new goodies.

Next stop: MapServer 6.0

Creating sitemap files for GeoNetwork

Sitemaps are a valuable way to index your content for web crawlers. GeoNetwork is a great tool for metadata management and a portal environment for discovery. I wanted to push all metadata resources out as a sitemap so that content can be found by web crawlers. Python to the rescue:

    #!/usr/bin/python

    import MySQLdb

    # connect to db
    db = MySQLdb.connection(host='127.0.0.1', user='foo', passwd='foo', db='geonetwork')

    # print out XML header
    print """<?xml version="1.0" encoding="UTF-8"?>
    <urlset
     xmlns="http://www.sitemaps.org/schemas/sitemap/0.9"
     xmlns:geo="http://www.google.com/geo/schemas/sitemap/1.0"
     xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
     xsi:schemaLocation="http://www.sitemaps.org/schemas/sitemap/0.9
     http://www.sitemaps.org/schemas/sitemap/0.9/sitemap.xsd">"""

    # fetch all metadata
    db.query("""select id, schemaId, changeDate from Metadata where isTemplate = 'n'""")
    r = db.store_result()

    for row in r.fetch_row(0):
        # pick the endpoint that serves this record's schema
        if row[1] == 'fgdc-std':
            url = 'http://devgeo.cciw.ca/geonetwork/srv/en/fgdc.xml'
        elif row[1] == 'iso19139':
            url = 'http://devgeo.cciw.ca/geonetwork/srv/en/iso19139.xml'
        else:
            # skip schemas with no known endpoint instead of reusing a stale url
            continue

        # write out a url element
        print """ <url>
      <loc>%s?id=%s</loc>
      <lastmod>%s</lastmod>
      <geo:geo>
       <geo:format>%s</geo:format>
      </geo:geo>
     </url>""" % (url, row[0], row[2], row[1])

    print '</urlset>'

Done! It would be great if this were an out-of-the-box feature of GeoNetwork.
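A variation on the same idea, sketched with the standard library's ElementTree so that escaping and namespaces are handled by the serializer rather than by string formatting. The base URL, the `ENDPOINTS` mapping and the sample rows are hypothetical stand-ins for the database query above.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = 'http://www.sitemaps.org/schemas/sitemap/0.9'
GEO_NS = 'http://www.google.com/geo/schemas/sitemap/1.0'
ET.register_namespace('', SITEMAP_NS)
ET.register_namespace('geo', GEO_NS)

# hypothetical host; substitute your own GeoNetwork base URL
BASE = 'http://example.org/geonetwork/srv/en'
ENDPOINTS = {'fgdc-std': 'fgdc.xml', 'iso19139': 'iso19139.xml'}


def build_sitemap(rows):
    """Build a sitemap string from (id, schema, change_date) tuples."""
    urlset = ET.Element('{%s}urlset' % SITEMAP_NS)
    for record_id, schema, changed in rows:
        endpoint = ENDPOINTS.get(schema)
        if endpoint is None:
            continue  # no known endpoint for this schema
        url = ET.SubElement(urlset, '{%s}url' % SITEMAP_NS)
        loc = ET.SubElement(url, '{%s}loc' % SITEMAP_NS)
        loc.text = '%s/%s?id=%s' % (BASE, endpoint, record_id)
        ET.SubElement(url, '{%s}lastmod' % SITEMAP_NS).text = str(changed)
        geo = ET.SubElement(url, '{%s}geo' % GEO_NS)
        ET.SubElement(geo, '{%s}format' % GEO_NS).text = schema
    return ET.tostring(urlset, encoding='unicode')


# made-up rows standing in for the Metadata table query
rows = [(1, 'iso19139', '2009-03-12'), (2, 'fgdc-std', '2009-03-10')]
print(build_sitemap(rows))
```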

MapServer Disaster: you have got to be kidding me

http://n2.nabble.com/FW%3A-MapServer-enhancements-refactoring-project-td2571268.html

I’m beyond words at this point.

fun with Shapelib

We have some existing C modules which do a bunch of data processing, and wanted the ability to spit out shapefiles on demand. Shapelib is a C library which allows for reading and writing shapefiles and dbf files. Thanks to the API docs, here’s a pared down version of how to write a new point shapefile (with, in this case, one record):

    #include <stdio.h>
    #include <stdlib.h>
    #include <libshp/shapefil.h>

    /* build with: gcc -O -Wall -ansi -pedantic -g foo.c -L/usr/local/lib -lshp */

    int main() {
        double *x;
        double *y;
        SHPHandle hSHP;
        SHPObject *oSHP;
        DBFHandle hDBF;

        x = malloc(sizeof(*x));
        y = malloc(sizeof(*y));
        if (x == NULL || y == NULL) {
            fprintf(stderr, "out of memory\n");
            free(x);
            free(y);
            return 1;
        }

        /* create shapefile and dbf */
        hSHP = SHPCreate("bar", SHPT_POINT);
        hDBF = DBFCreate("bar");
        DBFAddField(hDBF, "stationid", FTString, 25, 0);

        /* add record */
        x[0] = -75;
        y[0] = 45;
        oSHP = SHPCreateSimpleObject(SHPT_POINT, 1, x, y, NULL);
        SHPWriteObject(hSHP, -1, oSHP); /* -1 appends a new shape */
        DBFWriteStringAttribute(hDBF, 0, 0, "abcdef");

        /* destroy */
        SHPDestroyObject(oSHP);

        /* close shapefile and dbf */
        SHPClose(hSHP);
        DBFClose(hDBF);

        free(x);
        free(y);

        return 0;
    }

Done!

Less Than 4 Hours

A benefit of open source.

< 4 hours. That’s how long it took to address a MapServer bug in WMS 1.3.0. Having been on the other side of these many times, it’s gratifying to bang out quick fixes as well.

Committing often 🙂

Modified: 12 March 2009 09:31:07 EST